Artificial intelligence (AI) is at an inflection point in health care. Fifty years of algorithm and software development have produced some powerful methods for extracting patterns from big data. For example, deep learning neural networks have been shown to be effective for image analysis, resulting in the first FDA-approved AI-assisted diagnosis of an eye disease called diabetic retinopathy, using only pictures of the patient’s eyes.

However, the application of AI in health care has also exposed many of its weaknesses, as outlined in a recent guidance document from the World Health Organization (WHO). The document covers a lengthy list of topics, each as important as the last: responsible, accountable, inclusive, equitable, ethical, unbiased, responsive, sustainable, transparent, trustworthy and explainable AI. These are all essential to delivering medical care and are consistent with how we approach medicine when the patient’s best interests are paramount.

It is not difficult to understand how an algorithm can be biased, exclusive, unfair or unethical. Its developers may not have given it the ability to distinguish good data from bad, or they may be unaware of problems in the data, because those problems often originate in discriminatory human behavior. One example is the unconscious triaging of emergency room patients differently based on skin color. Algorithms are good at exploiting these biases, and making them aware of the biases can be challenging. As the WHO guidance document suggests, we must carefully weigh the risks of AI against its potential benefits.

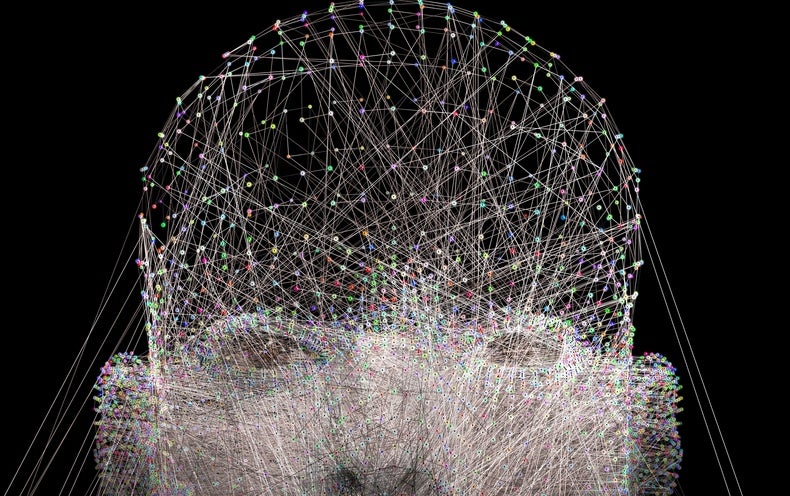

What is more difficult to understand is why AI algorithms may not be transparent, trustworthy and explainable. Transparency means that it is easy to understand the AI algorithm, how it works, and the computer code doing the work behind the scenes. Together with rigorous validation, this transparency builds trust in the software, which is essential for patient care. Unfortunately, most AI software used in the health care industry comes from commercial entities that need to protect their intellectual property and are therefore reluctant to divulge their algorithms and code. This may lead to a lack of trust in the AI and its work.

Trust and transparency are, of course, worthy goals. But what about explainability? One of the best ways to understand what AI is, or aspires to be, is to think about how humans solve health care challenges and make decisions. When faced with a challenging patient case, it is common to consult other clinicians, which draws on their knowledge and experience. One advantage of consulting a human is that we can follow up an answer with the question of why.

“Why do you think this treatment is the best practice?”

“Why do you recommend this app?”

A good clinical consultant should be able to explain why they arrived at a particular recommendation. Unfortunately, modern AI algorithms are rarely able to answer the question of why they think an answer is a good one. Explainability is another dimension of trust. It can help address issues of bias and ethics, and it can help clinicians learn from the AI, just as they would from a human consultant.

How do we get to explainable AI? Interestingly, one of the first successful AI algorithms in health care was the MYCIN program, developed by physician and computer scientist Edward Shortliffe in the early 1970s to prescribe antibiotics for patients in the intensive care unit. MYCIN was a type of AI called an expert system, and it could answer “why?” by backtracking through its probability calculations to tell the user how it had arrived at an answer.

This was an important advance in AI, and one we seem to have lost in the search for better-performing algorithms. If the developers of an algorithm truly understand how it works, then explainable AI should be possible. It simply takes time and effort to track the algorithm as it iterates and to present the path it took to the user in a human-understandable form.
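To make the idea concrete, here is a minimal Python sketch of a rule-based system that records which rule produced each conclusion and can then walk back through that record to answer “why?”. It is only an illustration of the general approach. The rules, fact names, confidence values and the way confidences are combined are all hypothetical assumptions, not MYCIN's actual rule base or calculations, and certainly not clinical guidance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    premises: tuple      # facts that must all be known for the rule to fire
    conclusion: str      # fact the rule adds when it fires
    strength: float      # illustrative confidence in [0, 1], an assumption


# Hypothetical toy rules for illustration only.
RULES = [
    Rule("R1", ("gram_negative", "rod_shaped"), "likely_enteric_bacteria", 0.8),
    Rule("R2", ("likely_enteric_bacteria", "hospital_acquired"), "consider_drug_x", 0.7),
]


def infer(facts, rules=RULES):
    """Forward-chain over the rules and remember which rule made each conclusion."""
    beliefs = dict(facts)   # fact name -> confidence
    trace = {}              # concluded fact -> rule that produced it
    changed = True
    while changed:
        changed = False
        for rule in rules:
            if rule.conclusion in beliefs:
                continue
            if all(p in beliefs for p in rule.premises):
                # Assumed combination: rule strength times the weakest premise.
                weakest = min(beliefs[p] for p in rule.premises)
                beliefs[rule.conclusion] = rule.strength * weakest
                trace[rule.conclusion] = rule
                changed = True
    return beliefs, trace


def explain(goal, beliefs, trace, depth=0):
    """Walk backward from a conclusion and return a readable chain of reasons."""
    indent = "  " * depth
    if goal not in trace:
        return [f"{indent}{goal}: given by the user (confidence {beliefs[goal]:.2f})"]
    rule = trace[goal]
    lines = [
        f"{indent}{goal}: concluded by {rule.name} "
        f"(confidence {beliefs[goal]:.2f}) because:"
    ]
    for premise in rule.premises:
        lines.extend(explain(premise, beliefs, trace, depth + 1))
    return lines


if __name__ == "__main__":
    facts = {"gram_negative": 1.0, "rod_shaped": 0.9, "hospital_acquired": 1.0}
    beliefs, trace = infer(facts)
    print("\n".join(explain("consider_drug_x", beliefs, trace)))
```

The key design choice is that the explanation costs almost nothing extra: the system stores the rule behind each conclusion at the moment it is made, so answering “why?” is a matter of reading that record back rather than reconstructing the reasoning after the fact.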

In other words, this should simply be a matter of priority for developers. Any AI algorithm so complex that its developers cannot understand how it works may not be a good candidate for health care.

We have made tremendous progress in AI, and we are all very excited about how it can help patients. We are also humbled by its failures, such as a recent study showing that AI results used to diagnose COVID-19 were unreliable because of biases in the data. When developing and evaluating AI algorithms for clinical use, we must keep clinicians in mind. We must constantly think about what is good for patients and how the software can earn the trust of the clinicians who use it. AI’s ability to explain itself will be key.

This is an opinion and analysis article; the views expressed by the author or authors are not necessarily those of Scientific American.